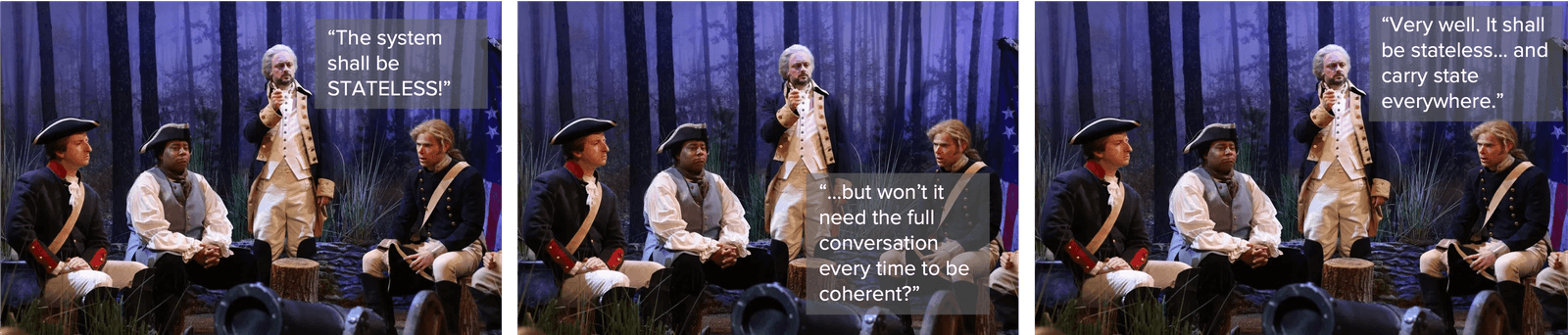

For decades, distributed systems have leaned on the elegance of statelessness as both a design principle and a scaling strategy, a clean abstraction in which each request arrives independent of the last, is processed in isolation, and leaves no residue behind. Stateless systems forget, and in forgetting they gain freedom: freedom to scale horizontally without coordination, freedom to fail without consequence, freedom from the operational burden of memory. It was a good model, and for some applications it served us well.

AI systems, however, expose the fiction.

What we now call “stateless inference” is only stateless in implementation, not in function. The state has not disappeared; it has merely been displaced. Conversation, reasoning, and meaning depend on prior context, and so that context must persist somewhere. Today, we carry it along inside every request, packed into tokens, replayed repeatedly, reconstructed turn after turn as though the system were reading the entire conversation from the beginning each time it speaks. The architecture claims statelessness, yet operationally it behaves as though state exists everywhere, because it does.

Context, stripped of its softer name, is simply state in motion.

Every request drags history with it, and as conversations lengthen that history grows heavier. Payload sizes expand, network transfer increases, inference latency rises, and compute is repeatedly spent parsing information the model has already seen. Larger context windows and faster hardware postpone the consequences, and clever engineering tricks such as KV caching and prompt compression soften the blow, yet none of these eliminate the fundamental inefficiency of replaying memory instead of maintaining it. The system is remembering by repetition rather than by design, and repetition always extracts a cost.
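To make the cost of repetition concrete, here is a rough sketch of the arithmetic. It assumes, purely for illustration, that every turn adds a fixed number of tokens to the transcript and that each request resends the whole transcript; the function names and the 200-token figure are hypothetical, and real systems with KV caching or prompt compression will land somewhere below the replayed total.

```python
def replayed_tokens(turn_sizes):
    """Total input tokens sent when every request replays the full history."""
    total = 0
    history = 0
    for size in turn_sizes:
        history += size   # the transcript grows by this turn
        total += history  # and the whole transcript is sent again
    return total


def incremental_tokens(turn_sizes):
    """Total input tokens if only each new turn had to be sent."""
    return sum(turn_sizes)


# Illustrative assumption: 50 turns, each adding 200 tokens to the transcript.
turns = [200] * 50
replayed = replayed_tokens(turns)
incremental = incremental_tokens(turns)

print(f"replayed:    {replayed} tokens")
print(f"incremental: {incremental} tokens")
print(f"overhead:    {replayed / incremental:.1f}x")
```

Under these assumptions the replayed total grows with the square of the number of turns, while the amount of genuinely new information grows only linearly. That gap is the price of remembering by repetition.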

The cost of a consistent conversation

This cost manifests first as bandwidth, then as compute, and finally as latency, forming a quiet but persistent scaling pressure. Statelessness once simplified scaling because nothing needed to be preserved between calls, yet AI workloads invert this advantage, since preserving continuity is precisely what makes them useful. A system that forgets the past cannot sustain reasoning, cannot maintain conversational coherence, and cannot build upon prior knowledge within a session. In practice, we have built stateful behavior atop stateless infrastructure, and the strain is beginning to show.

The predictable response is session affinity.

If context must persist, then it is more efficient to keep it close to where it was last used, allowing reuse of cached representations and avoiding the repeated transfer and reconstruction of conversational history. Sessions become “sticky,” not by philosophical choice but by operational necessity, because moving state becomes expensive. Latency improves, compute waste diminishes, and the illusion of statelessness weakens further as memory becomes an explicit dependency of performance.
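One simple way to picture stickiness is a router that derives the target node from the session identifier, so the same conversation keeps landing where its cached context lives. The sketch below is a minimal illustration, not a description of any particular load balancer; `sticky_node`, the node names, and the session IDs are all hypothetical.

```python
import hashlib


def sticky_node(session_id, nodes):
    """Map a session ID to one node, deterministically.

    A stable hash (not Python's per-process hash()) is used so that every
    router instance makes the same choice for the same session.
    """
    digest = hashlib.sha256(session_id.encode("utf-8")).digest()
    index = int.from_bytes(digest[:8], "big") % len(nodes)
    return nodes[index]


# Hypothetical inference nodes and sessions, for illustration only.
nodes = ["infer-a", "infer-b", "infer-c"]
for session_id in ["session-1", "session-2", "session-1"]:
    print(session_id, "->", sticky_node(session_id, nodes))
```

Note that plain modulo hashing remaps many sessions whenever the node list changes, which is one reason real deployments often reach for consistent hashing or cookie-based persistence instead.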

Yet sticky sessions introduce their own friction. Load balancing loses flexibility, failover grows more complex, and distributed mobility is constrained by the location of memory. Systems built for stateless freedom find themselves negotiating stateful gravity. The natural countermeasure is to externalize the state, placing session memory into shared stores, retrieval systems, or context databases that allow any inference node to resume the conversation without replaying its full history. This restores routing flexibility and horizontal scale, but it transforms memory into an architectural component that must be managed with the same rigor as data: consistency, ordering, integrity, and security now matter in ways they did not when context was merely embedded inside a prompt.
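A minimal sketch of externalized state might look like the following. It assumes a shared store that any node can read and write, represented here by an in-memory class so the example runs on its own; `SessionStore`, `handle_request`, and the version check are hypothetical illustrations rather than the API of any real product. The version number is there to show why ordering and consistency start to matter once memory leaves the prompt.

```python
class SessionStore:
    """In-memory stand-in for a shared session store (a real one is networked)."""

    def __init__(self):
        self._sessions = {}  # session_id -> (version, list of turns)

    def load(self, session_id):
        version, turns = self._sessions.get(session_id, (0, []))
        return version, list(turns)

    def append(self, session_id, expected_version, turn):
        """Append a turn only if nobody else wrote since we loaded."""
        version, turns = self._sessions.get(session_id, (0, []))
        if version != expected_version:
            raise RuntimeError("stale write: session changed since it was loaded")
        self._sessions[session_id] = (version + 1, turns + [turn])
        return version + 1


def handle_request(node_name, store, session_id, user_message):
    """Any node can resume a session by loading it from the store."""
    version, turns = store.load(session_id)
    # Placeholder for inference: a real node would feed `turns` to a model.
    reply = f"{node_name} reply #{len(turns) // 2 + 1}"
    version = store.append(session_id, version, ("user", user_message))
    store.append(session_id, version, ("assistant", reply))
    return reply


store = SessionStore()
print(handle_request("node-a", store, "s1", "hello"))
print(handle_request("node-b", store, "s1", "still there?"))
```

The second request is served by a different node, yet it continues the same conversation because the history lives in the store rather than in the request or on the first node.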

At this point, the deeper question emerges, one that reaches beyond optimization into design philosophy: should we continue carrying the entire conversation at all?

We are evolving toward selective state

The current approach treats context as a growing transcript, a continuous scroll of tokens replayed in full, yet machines are not bound to human conversational limits. We already see early movement toward selective memory, where summarization replaces raw history, retrieval replaces repetition, and structured memory distinguishes durable knowledge from transient dialogue. Instead of dragging the entire past forward, systems begin to preserve only what remains relevant, constructing a layered memory in which immediate context, distilled summaries, and persistent knowledge each serve distinct roles. The architecture shifts from remembering everything to remembering intelligently.
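The layering described above can be sketched in a few lines. This is an illustration under stated assumptions, not a reference design: `LayeredMemory`, `summarize`, and the `max_recent` limit are hypothetical names, and the summarizer is a deliberately crude placeholder (it keeps the first few words of each evicted turn) standing in for whatever summarization a real system would use.

```python
from dataclasses import dataclass, field


def summarize(existing, turn, limit=80):
    """Placeholder summarizer: keep the first five words of an evicted turn."""
    snippet = " ".join(turn.split()[:5])
    merged = f"{existing} | {snippet}" if existing else snippet
    return merged[-limit:]  # bound the summary so it cannot grow forever


@dataclass
class LayeredMemory:
    max_recent: int = 4                         # turns kept verbatim
    recent: list = field(default_factory=list)  # immediate context
    summary: str = ""                           # distilled older history
    facts: dict = field(default_factory=dict)   # persistent knowledge

    def add_turn(self, text):
        self.recent.append(text)
        # Old turns are folded into the summary instead of being replayed.
        while len(self.recent) > self.max_recent:
            oldest = self.recent.pop(0)
            self.summary = summarize(self.summary, oldest)

    def remember(self, key, value):
        self.facts[key] = value

    def build_context(self):
        """Assemble what is actually sent: facts, summary, recent turns."""
        parts = []
        if self.facts:
            pairs = "; ".join(f"{k}={v}" for k, v in sorted(self.facts.items()))
            parts.append("Facts: " + pairs)
        if self.summary:
            parts.append("Summary: " + self.summary)
        parts.extend(self.recent)
        return "\n".join(parts)


memory = LayeredMemory(max_recent=2)
memory.remember("name", "Ada")
for turn in ["one", "two", "three"]:
    memory.add_turn(turn)
print(memory.build_context())
```

The context sent to the model stays bounded by the size of the three layers rather than by the length of the conversation, which is the practical meaning of remembering intelligently.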

This is the real transition underway.

The industry began with stateless compute because compute was scarce and memory was cheap to discard. AI reverses this relationship, elevating context and continuity into first-class architectural concerns. State has never truly vanished; it has only been carried, replayed, and disguised within stateless designs. As AI systems mature, that disguise will fade, and architectures will evolve toward deliberate memory management rather than perpetual context transport.

Sessions will remain sticky because continuity has value. Context will remain clingy because meaning depends on memory. The fiction of statelessness will continue to erode, replaced by systems that explicitly understand what to remember, where to keep it, and when to release it, because the future of scalable AI will not belong to systems that carry state everywhere, but to those that manage it wisely.

About the Author

Lori MacVittie is a Distinguished Engineer and Chief Evangelist in F5’s Office of the CTO with deep expertise in application delivery, automation strategy, and infrastructure. She is known for turning complexity into clarity whether she’s defining guardrails for AI agents, dissecting brittle multicloud architectures, or probing the limits of scalable systems. She brings more than thirty years of industry experience across application development, IT architecture, and network and systems operations. Before joining F5, she served as an award-winning technology editor. MacVittie holds an M.S. in Computer Science and is a prolific author whose publications span security, cloud, and enterprise architecture. She is also an avid tabletop and video gamer with unapologetically strong opinions about cheese.
