AI agents are quickly transforming the way people engage with applications. Rather than navigating websites directly, users are increasingly relying on AI to handle tasks for them. This includes asking agents to read the news, plan business trips, purchase items, or manage accounts. To accomplish these tasks, AI agents browse websites, fill out forms, create accounts, log in, and place orders. From the perspective of an application, this activity appears strikingly human.

This shift signifies a fundamental change in web traffic patterns and challenges long-standing security assumptions. Traditional bot management and fraud defenses were designed to distinguish between human users and automated systems. However, agentic AI blurs this distinction. These agents are legitimate and authorized, often providing beneficial services, yet they operate at machine speed and scale. If security controls do not adapt, they risk blocking legitimate traffic, degrading the user experience, and costing real revenue.

A new trust challenge, at agent scale

As AI agents act on behalf of real users and organizations, authorization alone is no longer enough. An agent may be technically allowed to access an application, but that doesn’t mean every action it takes is appropriate, in scope, or economically safe. At scale, intent matters as much as identity.

This creates a new set of challenges as organizations are forced to rethink what trust means when automation behaves like a user. In practice, teams are running into several recurring issues:

- Authorized doesn’t always mean appropriate at scale. AI agents may have valid credentials, but organizations still need ways to enforce intent, scope, and economic limits rather than relying on binary “allow or deny” decisions.

- The trusted entity list is exploding. Applications already support hundreds of known beneficial bots. As AI agents capable of browsing, buying, and creating accounts proliferate, the number of trusted automated entities continues to grow rapidly.

- Legitimate agents are mistaken for abuse. Without agent‑aware visibility, beneficial automation is often blocked, leading to failed transactions, user friction, and lost revenue.

- Accountability breaks down between agents and humans. When applications can’t determine who an agent represents, personalization, policy enforcement, and trust all degrade.

- Agentic browsers introduce new risks. Prompt injection and compromised agent workflows can expose sensitive data, disrupt operations, and increase overall security exposure.

Together, these challenges make it clear that existing bot defenses need to evolve, and quickly.

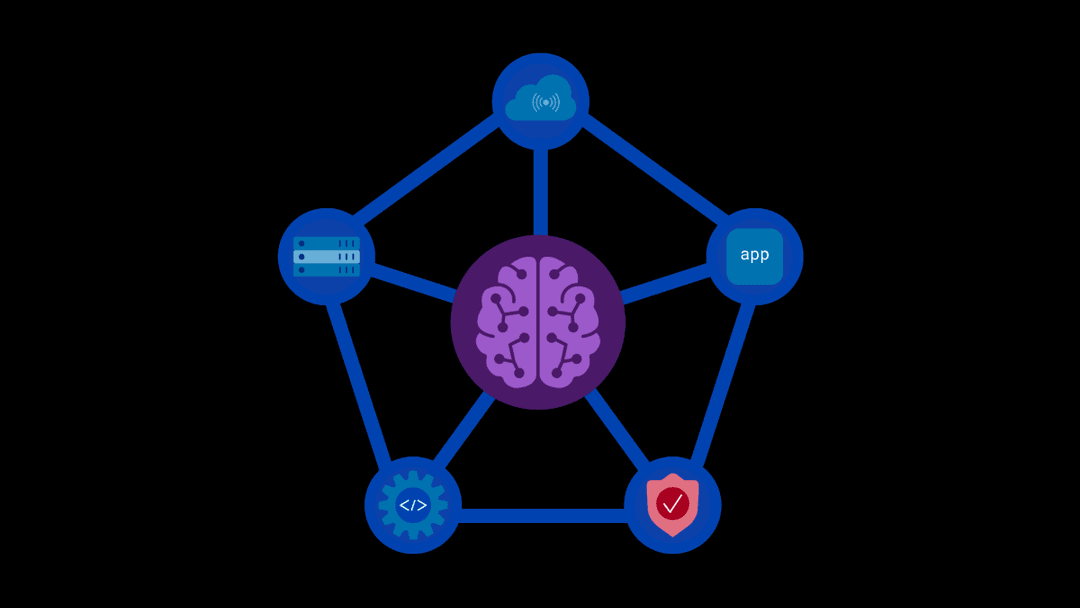

Bot defense, evolved for agentic AI

F5 Distributed Cloud Bot Defense is built to address this emerging reality. As AI agents increasingly perform meaningful tasks at machine speed, security teams need to differentiate between trusted automation and unacceptable risk, without breaking the workflows users rely on.

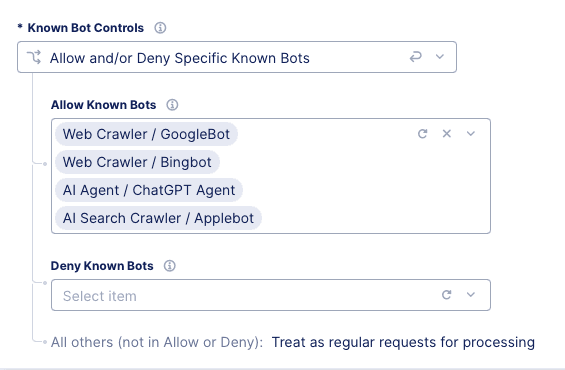

F5 supports emerging agent authentication and trust standards, such as Web Bot Auth and Know Your Agent (KYA), providing organizations with a practical path to safely enable AI-driven automation. Instead of blocking agent traffic by default, customers can explicitly approve AI agents for sensitive workflows while maintaining fine-grained control over how those agents behave.

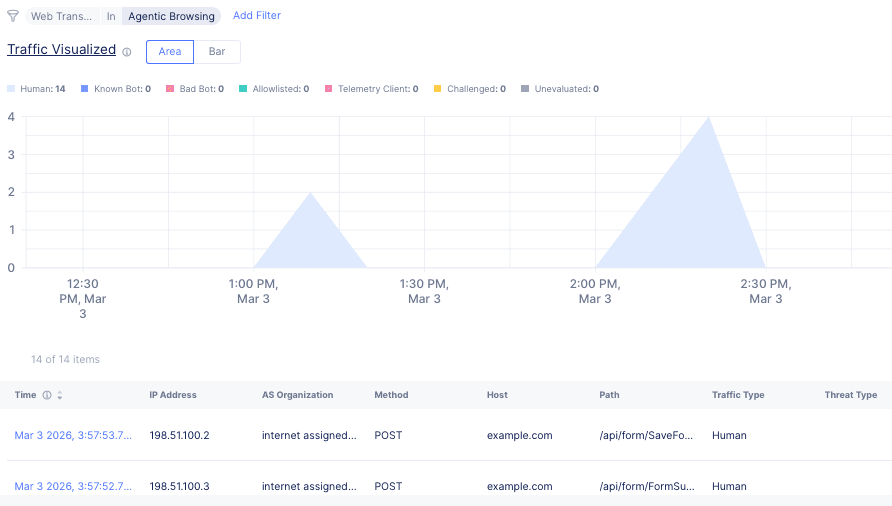

Just as importantly, F5 understands when an AI agent, not a human, is driving the browser session. Modern agents increasingly use full browsers and intentionally mimic human interaction patterns, making them difficult to detect with traditional signals. By analyzing high-confidence behavioral and interaction data, F5 Distributed Cloud Bot Defense makes it clear whether a human or an AI agent is running the session. This visibility reduces blind spots and allows organizations to make better decisions as AI-driven browsing becomes commonplace.

Turning agent trust into enforceable policy

Visibility alone isn’t enough. As interaction volume grows, organizations must convert agent trust into enforceable policy.

F5 enables teams to confidently permit trusted, policy-compliant agents while applying clear boundaries that prevent misuse of sensitive workflows. Agent-aware policies account for identity, integrity, source, and declared purpose, allowing companies to regulate precisely how AI agents interact with specific endpoints. The result is trusted automation with reduced operational and security risk.
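To make the idea of an agent-aware policy concrete, here is a minimal Python sketch of a per-endpoint check over the four attributes named above: identity, integrity, source, and declared purpose. It is an illustration only and does not represent F5 Distributed Cloud Bot Defense configuration or APIs. The names `AgentRequest`, `EndpointPolicy`, `POLICIES`, and `evaluate`, the three outcomes (allow, challenge, deny), the example endpoint and agent names, and the choice to allow endpoints with no policy are all assumptions made for this example.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    CHALLENGE = "challenge"
    DENY = "deny"


@dataclass(frozen=True)
class AgentRequest:
    agent_id: str           # identity the agent presents
    signature_valid: bool   # integrity: result of an upstream cryptographic check
    source: str             # operator or network the traffic comes from
    declared_purpose: str   # what the agent says it is doing
    endpoint: str           # path being requested


@dataclass(frozen=True)
class EndpointPolicy:
    allowed_agents: frozenset
    allowed_sources: frozenset
    allowed_purposes: frozenset


# Hypothetical policy table: only one sensitive endpoint is listed.
POLICIES = {
    "/checkout": EndpointPolicy(
        allowed_agents=frozenset({"shopping-agent"}),
        allowed_sources=frozenset({"example-operator"}),
        allowed_purposes=frozenset({"purchase"}),
    ),
}


def evaluate(req: AgentRequest, policies=POLICIES) -> Decision:
    """Return a decision for one agent request against the endpoint's policy."""
    policy = policies.get(req.endpoint)
    if policy is None:
        # Assumption: endpoints without a policy are not sensitive.
        return Decision.ALLOW
    if not req.signature_valid:
        # Failed integrity check: the claimed identity cannot be trusted.
        return Decision.DENY
    if req.agent_id not in policy.allowed_agents:
        return Decision.DENY
    if req.source not in policy.allowed_sources:
        # Known agent from an unexpected source: ask for more proof.
        return Decision.CHALLENGE
    if req.declared_purpose not in policy.allowed_purposes:
        # Known agent acting outside its approved scope.
        return Decision.CHALLENGE
    return Decision.ALLOW
```

In this sketch, an approved agent that declares an out-of-scope purpose is challenged rather than denied outright, which reflects the point that decisions need not be a binary "allow or deny."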

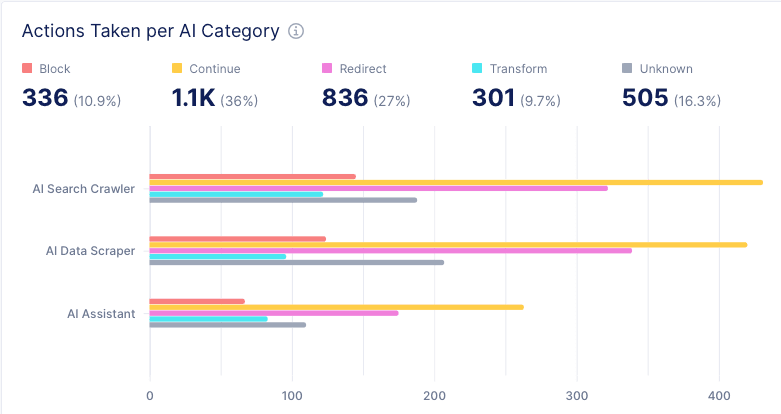

F5 also helps organizations understand the broader business impact of agent behavior. Teams gain insight into which agents access which applications, how agent activity affects conversion and fraud rates, and where risk may arise.

With shared visibility across security, fraud, and application teams, organizations can respond quickly by tightening controls, enforcing rate limits, or blocking activity when necessary.
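As one illustration of what enforcing rate limits per agent could look like, the following Python sketch implements a simple token bucket keyed by agent identifier. This is a generic technique, not a description of how F5 implements rate limiting. The class names `TokenBucket` and `AgentRateLimiter`, the parameters `capacity` and `refill_per_second`, and the injectable `clock` are assumptions chosen for this example.

```python
import time
from dataclasses import dataclass


@dataclass
class TokenBucket:
    capacity: float           # maximum burst size
    refill_per_second: float  # sustained request rate
    tokens: float             # tokens currently available
    updated_at: float         # timestamp of the last refill

    def try_consume(self, now: float, cost: float = 1.0) -> bool:
        # Refill based on elapsed time, capped at capacity.
        elapsed = max(0.0, now - self.updated_at)
        self.tokens = min(self.capacity, self.tokens + elapsed * self.refill_per_second)
        self.updated_at = now
        if self.tokens >= cost:
            self.tokens -= cost
            return True
        return False


class AgentRateLimiter:
    """Keeps one token bucket per agent identifier."""

    def __init__(self, capacity: float, refill_per_second: float, clock=time.monotonic):
        self._capacity = capacity
        self._refill = refill_per_second
        self._clock = clock  # injectable so the limiter can be tested deterministically
        self._buckets = {}

    def allow(self, agent_id: str) -> bool:
        now = self._clock()
        bucket = self._buckets.get(agent_id)
        if bucket is None:
            # New agents start with a full bucket.
            bucket = TokenBucket(self._capacity, self._refill, self._capacity, now)
            self._buckets[agent_id] = bucket
        return bucket.try_consume(now)
```

Tightening controls in this model amounts to lowering `capacity` or `refill_per_second` for the limiter that covers a given class of agents, while each agent's budget remains independent of the others.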

Managing agent traffic with confidence

As AI becomes a permanent part of application traffic, confidence becomes critical. F5 Distributed Cloud Bot Defense helps organizations understand who is interacting with their applications and why. It also automatically applies the right controls and continuously verifies that agent behavior aligns with expectations.

This approach transforms agent traffic from an unknown risk into a manageable, observable part of digital operations. Instead of reacting to problems after the fact, teams gain proactive control over how automation interacts with their most valuable workflows.

AI agents are here. The question is, do you manage them or let them disrupt your business?

If you’re interested in learning more about F5 Distributed Cloud Bot Defense, please get in touch.
